Benefits of quantilope's A/B Pre-Roll Test

Safeguards your advertising investment by pre-testing ads with your target consumers

Tests among various audiences for well-rounded feedback

Optimizes individual elements of an ad to increase its chances of success

Applications of quantilope's A/B Pre-Roll Test

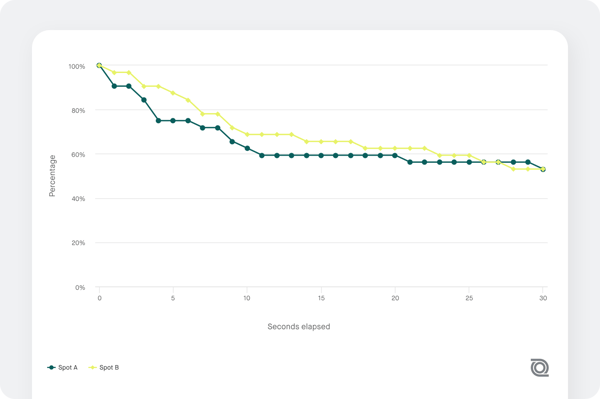

Which advertisement captivates consumers most?

Spot B appears to captivate consumers more, with a higher view-through rate, particularly in the opening seconds.

Are there certain scenes in my ad that prompt viewers to ‘skip ad’?

Many consumers chose to skip Spot A between the 3- and 4-second marks.

Frequently Asked Questions (FAQs):

What does quantilope's A/B Pre-Roll Test measure?

quantilope's A/B Pre-Roll Test measures advertisement performance in digital environments by tracking metrics like view-through rates and identifying specific moments when viewers choose to skip ads.
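To illustrate what these two metrics capture, here is a minimal Python sketch. It is not quantilope's implementation. It assumes a hypothetical input format with one entry per viewer, holding either the second at which that viewer skipped or `None` if they watched to the end. The function names `view_through_curve` and `busiest_skip_window` are illustrative only.

```python
from collections import Counter
from typing import List, Optional, Sequence, Tuple


def view_through_curve(skip_times: Sequence[Optional[float]], length_s: int) -> List[float]:
    """Share of viewers still watching after each whole second 0..length_s.

    Hypothetical input: one entry per viewer, the skip time in seconds,
    or None if the viewer watched the whole spot.
    """
    n = len(skip_times)
    curve = []
    for t in range(length_s + 1):
        # A viewer counts as still watching at second t if they never
        # skipped or skipped strictly after t.
        still_watching = sum(1 for s in skip_times if s is None or s > t)
        curve.append(still_watching / n)
    return curve


def busiest_skip_window(skip_times: Sequence[Optional[float]]) -> Optional[Tuple[int, int]]:
    """Return the one-second window [k, k + 1) in which the most viewers skipped."""
    # Bucket each skip into the whole second it falls in (times are non-negative).
    buckets = Counter(int(s) for s in skip_times if s is not None)
    if not buckets:
        return None  # nobody skipped
    k, _ = buckets.most_common(1)[0]
    return k, k + 1


# Made-up skip times for ten viewers of a 15-second spot.
skips = [3.2, 3.5, 3.9, 7.1, None, None, 12.0, 3.1, None, 1.4]
print(busiest_skip_window(skips))          # (3, 4)
print(view_through_curve(skips, 15)[:5])   # [1.0, 1.0, 0.9, 0.9, 0.5]
```

In this toy data, the window with the most skips is between the 3- and 4-second marks. That is the kind of pattern a skip analysis is meant to surface.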

Should I test different video lengths or different creative hooks in an A/B Pre-Roll Test?

It's usually more strategic to keep the length identical (for example, two 15-second spots) and vary only the first 3–5 seconds, which isolates the "hook" that keeps viewers from hitting the skip button.

How do I ensure my A/B Pre-Roll test results are valid and statistically significant?

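Most testing tools use a split-audience setup in which each unique user sees only one version of the ad. This prevents bias from cross-exposure and helps ensure the data reflects true preference. Statistical significance also depends on sample size: a difference in a metric such as view-through rate should only be treated as real once a significance test shows it is unlikely to be due to chance alone.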
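The two ideas behind a valid A/B comparison can be sketched in Python: keeping each user in a single group, and checking whether a gap in view-through rate is larger than chance would explain. This is a minimal sketch under stated assumptions and not how any particular testing tool works. Assignment hashes a user ID with a hypothetical salt string, so repeat visits always land in the same group. The significance check is a standard two-sided, two-proportion z-test based on the normal approximation. It treats "watched to the end" as a yes/no outcome per viewer.

```python
import hashlib
import math
from typing import Tuple


def assign_variant(user_id: str, salt: str = "preroll-test-1") -> str:
    """Deterministically place a user in group A or B (salt is a hypothetical test name)."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    # The same user ID and salt always produce the same digest, hence the same group.
    return "A" if digest[0] % 2 == 0 else "B"


def two_proportion_z_test(completed_a: int, n_a: int,
                          completed_b: int, n_b: int) -> Tuple[float, float]:
    """Two-sided z-test for a difference in view-through rates between two independent groups."""
    p_a = completed_a / n_a
    p_b = completed_b / n_b
    # Pooled rate under the null hypothesis that both spots perform equally.
    pooled = (completed_a + completed_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("all or none of the viewers completed; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts: 120 of 400 viewers completed spot A, 160 of 400 completed spot B.
z, p = two_proportion_z_test(120, 400, 160, 400)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With these made-up counts the gap of 10 percentage points gives a p-value well below 0.05. The same gap with only a few dozen viewers per group would usually not. The normal approximation is a common default, but it becomes unreliable when groups are very small or completion rates are close to 0% or 100%.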

Does the call-to-action (CTA) need to be identical in both versions of an A/B Test?

Unless the CTA itself is the variable being tested, it should remain consistent so that differences in performance are attributed solely to the visual or narrative content of the ad.

What happens if the results are "flat" with no clear winner?

A neutral result suggests that the tested variations weren't distinct enough, signaling a need to test more polarized creative directions in the next round (A/B testing is often an iterative process).

Should I segment an A/B test by device type, such as mobile vs. desktop?

Yes, because viewing habits differ wildly by platform; a "mobile-first" edit with larger text may outperform a cinematic edit on phones, even if the content is identical.